Blog by Sumana Harihareswara, Changeset founder

Transparency And Accountability In Government Forensic Science

Hi, reader. I wrote this in 2017 and it's now more than five years old. So it may be very out of date; the world, and I, have changed a lot since I wrote it! I'm keeping this up for historical archive purposes, but the me of today may 100% disagree with what I said then. I rarely edit posts after publishing them, but if I do, I usually leave a note in italics to mark the edit and the reason. If this post is particularly offensive or breaches someone's privacy, please contact me.

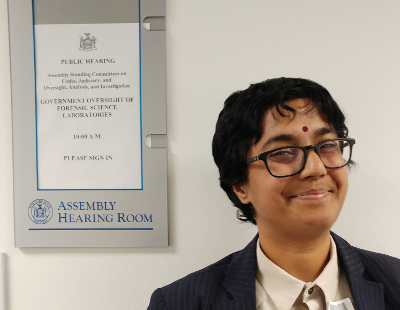

In February, I learned that the New York State Assembly was planning a public hearing on government oversight of forensic science laboratories, and I was then invited to give ten minutes of testimony and answer legislators' questions. The hearing was held jointly by the Assembly Standing Committees on Codes, on Judiciary, and on Oversight, Analysis and Investigation, and it was my first time speaking in this sort of capacity. I spoke on the importance of auditability and transparency in the software inside devices the government uses in laboratories and field tests, and on open source as a way to improve both. I also testified to the efficiency, cost savings, security, and quality gains available from using open source software and from reusing and sharing it with other state governments. Here's a PDF of my testimony as written; video and audio recordings are also available, as is a transcript that includes answers to the legislators' questions. It is a thrilling feeling to see my own words in a government hearing transcript, in that typeface and with those line numbers!

As I was researching my testimony, I got a lot of help from friends who introduced me to people who work in forensics or in this corner of the law. And I found an article by lawyer Rebecca Wexler on the danger of closed-source, unauditable code used in forensic science in the criminal justice system, and got the committee to also invite her to testify. Her testimony's also available in the recordings and transcript I link to above. And today she has a New York Times piece, "How Computers Are Harming Criminal Justice", which includes specific prescriptions:

Defense advocacy is a keystone of due process, not a business competition. And defense attorneys are officers of the court, not would-be thieves. In civil cases, trade secrets are often disclosed to opposing parties subject to a protective order. The same solution should work for those defending life or liberty.

The Supreme Court is currently considering hearing a case, Wisconsin v. Loomis, that raises similar issues. If it hears the case, the court will have the opportunity to rule on whether it violates due process to sentence someone based on a risk-assessment instrument whose workings are protected as a trade secret. If the court declines the case or rules that this is constitutional, legislatures should step in and pass laws limiting trade-secret safeguards in criminal proceedings to a protective order and nothing more.

I'll add here something I said during the questions-and-answers with the legislators:

And talking about the need for source code review here, I'm going to speak here as a programmer and a manager. Every piece of software that's ever been written that's longer than just a couple of lines long, that actually does anything substantive, has bugs. It has defects. And if you want to write code that doesn't have defects or if you want to at least have an understanding of what the defects are so that you can manage them, so that you can oversight them (the same way that we have a system of democracy, right, of course there's going to be problems, but we have mechanisms of oversight) -- If in a system that's going to have defects, if we don't have any oversight, if we have no transparency into what those instructions are doing and to what the recipe is, not only are we guaranteed to have bugs; we're guaranteed to have bugs that are harder to track down. And given what we've heard earlier about the fact that it's very likely that in some of these cases there will be discriminatory impacts, I think it's even more important; this isn't just going to be random.

I'll give you an example. HP, the computer manufacturer, they made a web camera, a camera built into a computer or a laptop that was supposed to automatically detect when there was a face. It didn't see black people's faces because they hadn't been tested on people with darker skin tones. Now at least that was somewhat easy to detect once it actually got out into the marketplace and HP had to absorb some laughter. But nobody's life was at stake, right?

When you're doing forensic work, of course in a state the size of New York State, edge cases, things that'll only happen under this combination of conditions, are going to happen every Tuesday, aren't they? And the way that the new generation of probabilistic DNA genotyping and other more complex bits of software work, it's not just: Okay, how much of fluid X is in sample Y? It's running a zillion different simulations based on different ideas of how the world could be. Maybe you've heard of the butterfly effect? If one little thing is off, you know, we might get a hurricane.

Comments

Brendan

13 Jun 2017, 16:29 p.m.

mms

13 Jun 2017, 19:11 p.m.

I will not say mean things about other people's proprietary software in public. I also am inclined to agree with both you and Ms. Wexler, despite the impression that by my profession I would be opposed.

Again, thank you for taking the time to talk to actual practitioners (in NYS, even!) before testifying.

THIS IS SO COOL